The Internet Is Being Rewritten — And Tech Enthusiasts Should Pay Attention

Estimated Read Time: 12 min

You’ve watched AI eat software. You’ve seen it write code, generate images, and pass bar exams. But there’s one thing most people haven’t noticed yet — it’s quietly rewriting how the entire web gets discovered. And if you build, create, or publish anything online, this is the most important shift you haven’t fully prepared for.

What If Google Wasn’t the Gatekeeper Anymore?

Here’s a thought experiment for you.

You open your laptop. You need to find the best database solution for a high-throughput application. In 2022, you’d Google it, scan a few Stack Overflow threads, open six tabs, read two blog posts, and eventually form an opinion.

In 2026? You describe the problem to an AI. It reads dozens of sources simultaneously, synthesises the technical tradeoffs, and gives you a structured answer with citations. You never visit a single website.

This isn’t a future scenario. This is Tuesday.

ChatGPT now has over 800 million weekly active users. Google’s AI Mode — powered by Gemini 2.5 — conducts dozens of sub-searches simultaneously for complex queries, composes an answer, and surfaces sources beneath it. Over 77% of mobile searches now end without a single click to any website.

The web isn’t broken. But the contract between content creators and the people who find them just changed — fundamentally, and fast.

The Architecture of the Old Web vs. the New One

If you think about the old web like a highway system, Google was the GPS. It told you which roads existed, which were fastest, and pointed you toward the destination. You still had to drive.

The new web is more like a food delivery app. You describe what you want. The app picks the restaurant, places the order, and brings it to you. You never interact with the restaurant directly — unless you specifically ask to.

For content creators and developers, this flips the entire model:

| Old SEO | New Reality (2026) |
|---|---|
| Rank #1 for a keyword | Be cited as a trusted source by AI |
| Drive clicks to your site | Feed AI with extractable, reliable answers |
| Optimise for Googlebot | Optimise for LLM crawlers too |
| Build backlinks for authority | Build brand signals across platforms |
| Target keywords | Target semantic intent + entities |

The transition isn’t clean. Both systems run in parallel right now. Traditional SEO still matters. But if you’re only playing the old game, you’re already losing ground.

Understanding GEO: The New Discipline Nobody Named Well

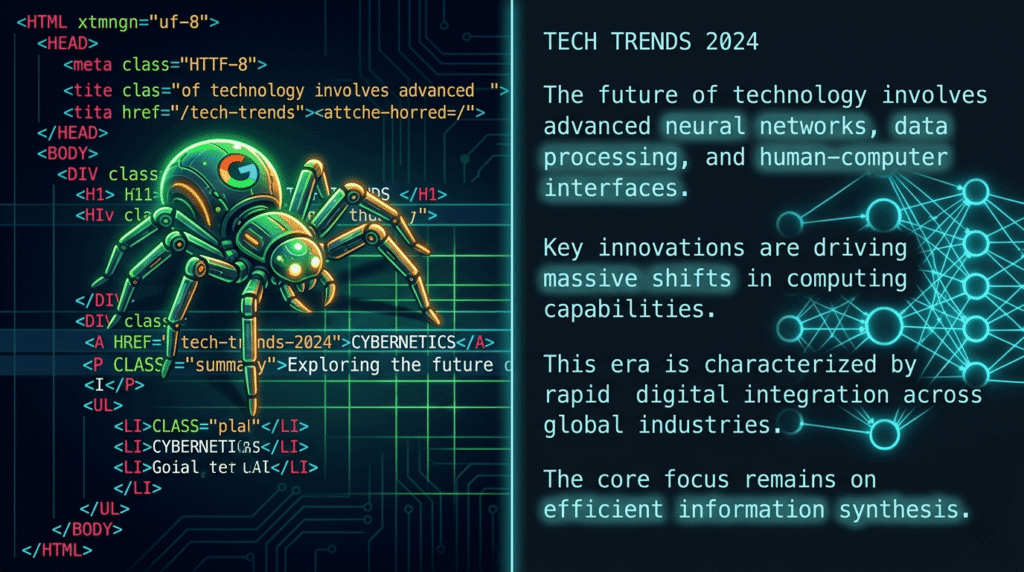

Generative Engine Optimisation (GEO) is the practice of making your content understandable, trustworthy, and citable by Large Language Models — not just Google.

Here’s what makes GEO technically interesting for those of us who like to understand systems:

LLMs don’t index pages the way Googlebot does. When ChatGPT’s agent visits your site, 46% of the time it loads your page in plain HTML mode — no CSS, no JavaScript, no schema rendering. It’s reading raw markup. That means your content’s signal lives in:

- Clean semantic HTML structure (H1 → H2 → H3 hierarchy)

- The actual prose quality and depth of your text

- Structured data in your `<head>` (JSON-LD schema)

- Page load speed (63% of AI agent visits bounce immediately on errors)

- Your URL slug and title tag matching the query intent

A practical example: A page titled /blog/post-12345 with the content buried in a JavaScript-rendered component is effectively invisible to many AI crawlers. A page at /how-postgresql-handles-concurrent-writes with clean semantic HTML and a well-structured FAQ section is gold.
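One way to sanity-check this is to look at a page the way a fetch without JavaScript would. The sketch below uses only Python's standard `html.parser`. The class name `PlainHTMLView`, the helper `plain_view`, and the sample markup are made up for illustration, and real AI crawlers may process pages differently.

```python
from html.parser import HTMLParser


class PlainHTMLView(HTMLParser):
    """Collects headings and text from raw markup, ignoring script and style bodies."""

    SKIPPED = {"script", "style"}
    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.headings = []     # list of (level, text) tuples
        self.text_parts = []   # text found outside script/style
        self._skip_depth = 0
        self._heading = None   # (level, [parts]) while inside a heading tag

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1
        elif tag in self.HEADINGS:
            self._heading = (int(tag[1]), [])

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth:
            self._skip_depth -= 1
        elif tag in self.HEADINGS and self._heading:
            level, parts = self._heading
            self.headings.append((level, " ".join(parts)))
            self._heading = None

    def handle_data(self, data):
        text = data.strip()
        if not text or self._skip_depth:
            return
        self.text_parts.append(text)
        if self._heading:
            self._heading[1].append(text)


def plain_view(html):
    """Return (headings, text) as seen without executing any JavaScript."""
    parser = PlainHTMLView()
    parser.feed(html)
    parser.close()
    return parser.headings, " ".join(parser.text_parts)


# Hypothetical page: one static paragraph, one paragraph injected by a script.
SAMPLE_PAGE = """
<html><head><title>Demo</title></head>
<body>
<article>
<h1>How PostgreSQL handles concurrent writes</h1>
<p>Static paragraph present in the markup.</p>
<div id="app"></div>
<script>document.getElementById("app").textContent = "Rendered later";</script>
</article>
</body></html>
"""

headings, text = plain_view(SAMPLE_PAGE)
```

Here `headings` holds the single H1 and `text` contains the static paragraph, while the string the script would inject never shows up. If the main content of a page only appears after scripts run, this kind of check makes that visible quickly.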

The llms.txt File — A New Web Standard Worth Watching

Similar to robots.txt telling Googlebot what to crawl, llms.txt is an emerging (and controversial) web standard that tells LLM crawlers how to navigate your content. Adoption is still tiny — under 0.1% of top sites — but it’s a fascinating signal of where web infrastructure is heading.

Worth adding to your site? Probably yes, even if just as a statement of intent. Worth betting your whole strategy on? Absolutely not yet.
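If you do want to try it, the file itself is just Markdown. The sketch below generates one in the layout the llms.txt proposal describes as far as we understand it: an H1 title, a blockquote summary, then H2 sections containing link lists. The function name `build_llms_txt`, the site name, and the URLs are hypothetical, and since the standard is still in flux the exact layout should be checked against the current proposal.

```python
def build_llms_txt(site_name, summary, sections):
    """Build llms.txt content.

    sections maps a section heading to a list of (title, url, note) tuples;
    note may be an empty string.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            entry = f"- [{title}]({url})"
            if note:
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site data, for illustration only.
content = build_llms_txt(
    "Example Dev Blog",
    "Postmortems and benchmarks from running PostgreSQL in production.",
    {
        "Articles": [
            (
                "How PostgreSQL handles concurrent writes",
                "https://example.com/how-postgresql-handles-concurrent-writes",
                "Locking behaviour explained with examples",
            ),
        ],
    },
)
```

Writing `content` to `/llms.txt` at the site root is all the deployment there is, which is part of why it is cheap to experiment with.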

The Zero-Click Problem — And Why It’s More Nuanced Than You Think

Every tech publication is panicking about zero-click searches. Let’s actually think through this properly.

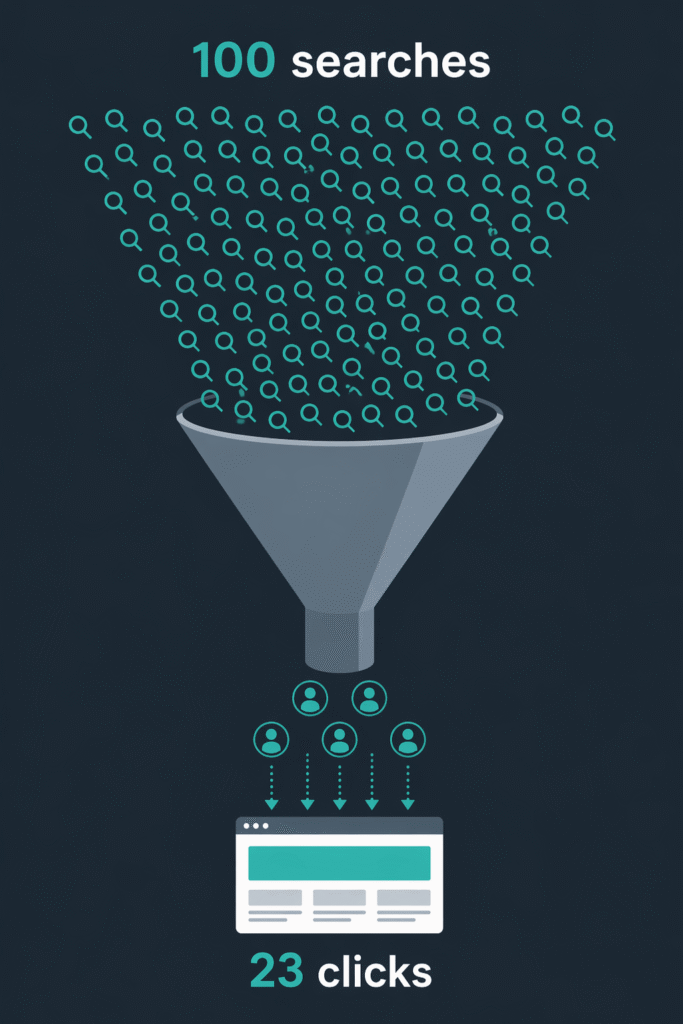

The stat everyone quotes: Over 60% of Google searches now end without a click.

What that number hides: Not all clicks are equal, and not all zero-clicks are losses.

Where zero-click costs you little: informational, top-of-funnel queries. “What is a hash collision?” “Difference between REST and GraphQL.” “What does HTTP 429 mean?” These were never great traffic sources; visitors bounced quickly and didn’t convert.

Where zero-click hurts: mid-funnel research queries, where AI is now absorbing comparison intent. Queries like “Best vector database for production” or “Is Supabase ready for enterprise?” used to drive meaningful traffic to well-researched articles. Now they feed AI answers.

What clicks remain are much higher intent. The person who clicks through past an AI answer is motivated. They want depth, specificity, a person to trust, or a product to buy. If your content is shallow, you won’t survive. If your content is genuinely expert, you’ll convert better than before.

For tech enthusiasts building or writing: Start thinking about which queries you’re targeting. Are they informational (AI will eat them) or are they specific, experiential, or decision-stage (you can still win these)?

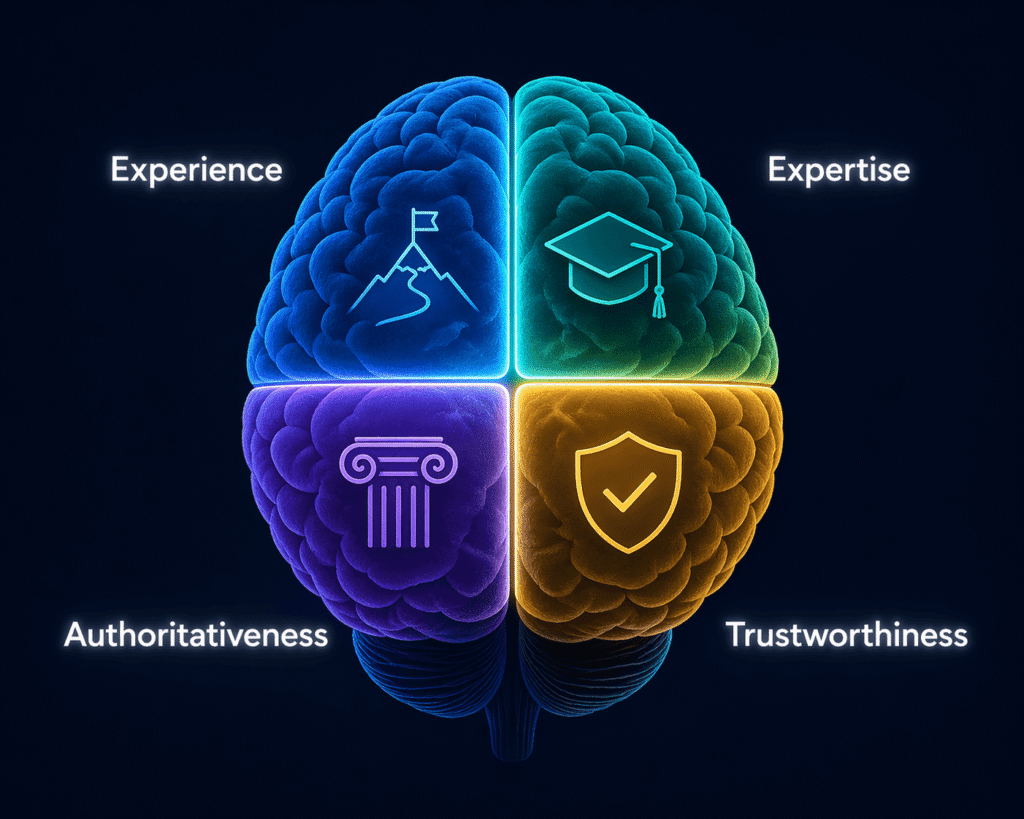

E-E-A-T: Why This Is the Most Technically Interesting SEO Concept Right Now

Experience. Expertise. Authoritativeness. Trustworthiness.

Google’s quality framework dates back to 2014, when it was still E-A-T; the extra E for Experience was added in late 2022. But in 2026, it has become the core signal that separates human-generated expertise from AI-generated content noise.

Here’s the technical reason this matters now: AI can aggregate knowledge, but it cannot generate experience.

An AI can write “here’s how to optimise PostgreSQL query performance.” It cannot write “here’s the specific mistake I made in our sharded Postgres setup at 10M rows/day that caused a 3-second p99 latency spike — and the exact index change that fixed it in 40 minutes.”

That second version contains:

- A specific context (scale, architecture)

- A failure mode (with measurable symptoms)

- A resolution (with timeline and mechanism)

This is content AI cannot synthesise. It requires a human who was there.

E-E-A-T signals Google and LLMs are now reading:

- Author bylines with verifiable credentials and social presence

- First-person experiential writing with specific, falsifiable claims

- External citations and references to your work (not just your backlinks)

- User reviews and UGC mentioning your brand

- Community discussions referencing your content (Reddit, HN, GitHub issues)

Brands are now 6.5x more likely to be cited by AI through third-party sources than through their own domain. What others say about you matters more than what you say about yourself.

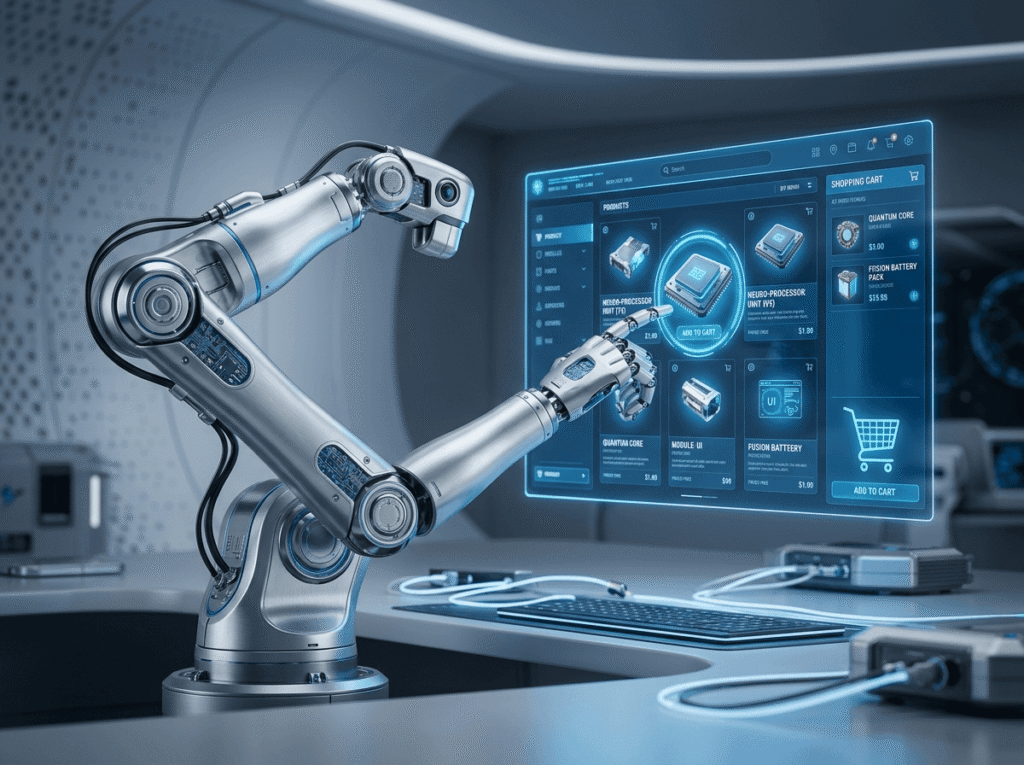

Agentic AI: The Shift That Changes Everything in 12–24 Months

This is the part most SEO content glosses over — and it’s the most consequential technical development to understand.

Agentic AI isn’t AI that answers questions. It’s AI that takes actions.

OpenAI has already open-sourced an Agentic Commerce Protocol. Shopify merchants can enable AI-assisted checkout with a single line of code. Gartner projects that by 2028, 90% of B2B buying will be AI-agent intermediated — over $15 trillion of spend flowing through systems that evaluate content, compare options, and make recommendations without a human in the loop.

Think about what that means structurally for your content or product:

An AI agent shopping for the best monitoring tool for an SRE team doesn’t read your blog post. It parses your structured data. It checks your pricing page schema. It reads your docs. It compares your feature list against a requirements spec. It may even test your free tier. All without a human present.

If your product or content isn’t machine-readable at a structural level, you’re invisible to this buyer.

For developers and tech builders: this is infrastructure-level thinking, not marketing-level thinking. Your robots.txt, your schema markup, your API documentation structure, your page load performance — these are no longer just technical SEO details. They’re how AI agents decide whether to recommend you.
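To make "machine-readable at a structural level" concrete, here is a rough sketch of what the agent side of that interaction might look like: pulling JSON-LD blocks out of a pricing page and reading the offers. It assumes the page embeds schema.org `SoftwareApplication` data with an `offers` property. The product name, prices, and the helper names `JsonLdExtractor` and `read_offers` are invented for the example, and actual agents may work quite differently.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects parsed JSON-LD objects from <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._in_jsonld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed block is skipped (assumed agent behaviour)


def read_offers(html):
    """Return (product name, price, currency) for every offer found in the page."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    found = []
    for block in parser.blocks:
        if not isinstance(block, dict):
            continue
        offers = block.get("offers", [])
        if isinstance(offers, dict):  # a single offer may appear as a bare object
            offers = [offers]
        for offer in offers:
            found.append(
                (block.get("name"), offer.get("price"), offer.get("priceCurrency"))
            )
    return found


# Hypothetical pricing page for a made-up product.
PRICING_PAGE = """
<html><head>
<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@type": "SoftwareApplication",
  "name": "ExampleMonitor",
  "offers": [
    {"@type": "Offer", "price": "0", "priceCurrency": "USD"},
    {"@type": "Offer", "price": "49", "priceCurrency": "USD"}
  ]
}
</script>
</head><body><h1>Pricing</h1></body></html>
"""

offers = read_offers(PRICING_PAGE)
```

A page that only shows its prices inside a client-rendered widget would return an empty list here, which is roughly what "invisible to this buyer" means in practice.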

What Actually Works in 2026 (No Vague Advice)

Here’s the practical breakdown — specific, actionable, technically grounded.

1. Write content AI can’t replicate

Original benchmarks. Real architecture decisions. Postmortems. Specific debugging stories. If you could generate it with a prompt, it has no differentiation.

2. Make your HTML readable by machines

- Clean semantic structure: `<article>`, `<section>`, `<h1>`–`<h3>` hierarchy

- JSON-LD schema for FAQs, how-tos, and articles

- No content hidden behind JavaScript on load

- Fast TTFB (AI crawlers bounce on slow servers — same as users)
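For the JSON-LD item, a minimal sketch of generating a schema.org `FAQPage` block from question and answer pairs might look like this. The function name `faq_jsonld` is hypothetical; the property names (`mainEntity`, `Question`, `acceptedAnswer`, `Answer`) follow the schema.org vocabulary.

```python
import json


def faq_jsonld(pairs):
    """Render (question, answer) pairs as a FAQPage JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # Escape "</" so answer text cannot close the script tag early;
    # "\/" is a valid JSON escape for "/".
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


snippet = faq_jsonld(
    [
        (
            "What does HTTP 429 mean?",
            "Too Many Requests: the client has sent too many requests "
            "in a given amount of time.",
        ),
    ]
)
```

The resulting `snippet` can be placed in the page's `<head>` so it is present in the raw HTML rather than injected by a script after load.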

3. Build your signal outside your own domain

Participate in Reddit threads. Answer questions on Hacker News. Publish on GitHub. Get cited in industry newsletters. AI systems read these platforms and use them to assess your credibility.

4. Target complex, layered queries

Google’s AI Mode runs queries that are 2–3x longer than traditional searches. Content that answers compound, nuanced questions (“how does X handle Y under Z conditions”) wins citations over thin answers to simple questions.

5. Think multi-platform, not just Google

ChatGPT, Perplexity, Gemini, LinkedIn, YouTube — discovery is fragmented. Over 30% of all referral traffic from ChatGPT goes to just 10 domains. If you’re not building presence across these surfaces, you’re depending entirely on a single channel that’s shrinking.

6. Treat your technical SEO as AI infrastructure

- `robots.txt` is more important than ever: AI crawlers respect it

- Add `llms.txt` as a signal of intent

- Ensure clean 200 responses, minimal redirects, no CAPTCHAs on crawlable content

- Use `FAQPage` schema: AI search citations heavily favour FAQ-structured content
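A quick way to audit the `robots.txt` item is to check what your rules actually allow for a given crawler. The sketch below uses Python's standard `urllib.robotparser`. The user-agent tokens in `AI_AGENTS` are examples of crawler names and should be verified against each vendor's documentation, and the sample rules and the helper name `crawl_access` are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Example crawler tokens (an assumption: confirm current names with each vendor).
AI_AGENTS = ["GPTBot", "PerplexityBot", "Googlebot"]


def crawl_access(robots_txt, url, agents=AI_AGENTS):
    """Return {agent: allowed} for one URL under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical rules: block one crawler from drafts, allow everything else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /drafts/

User-agent: *
Allow: /
"""

drafts = crawl_access(SAMPLE_ROBOTS, "https://example.com/drafts/post")
public = crawl_access(SAMPLE_ROBOTS, "https://example.com/blog/post")
```

With these sample rules, `drafts` reports `GPTBot` as blocked and the other agents as allowed, while `public` allows all three. Running the same check against your live file is a cheap way to catch a rule that blocks more than you intended.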

The Bigger Picture

Search is undergoing a platform-level shift — from a directory to an operating system. Google, ChatGPT, and Perplexity aren’t competing to show you links anymore. They’re competing to resolve your intent directly.

For tech enthusiasts, this is genuinely fascinating to watch. The infrastructure of how humans find information online is being rebuilt in real time. The winners won’t be those who game the algorithm — they’ll be those who create content so technically accurate, so experientially rich, and so structurally sound that both humans and AI systems have no choice but to trust them.

The playbook isn’t complicated. But it does require something that was always supposed to be the point: actually knowing what you’re talking about.

Found this useful? Share it with someone who’s still writing for the 2019 web.